So we will spend $25 per code review now?

No, you should not! And you need to change how you do code reviews.

Claude Code Review

Unless you’ve been living under a rock... which is unlikely given you’re out here on niche Twitter reading articles written by me, you’ve heard the non-stop coverage about Anthropic releasing “Claude Code Review”.

The most incredible thing about it is that they mention your cost will come out to between $15 and $25 (!!!!) per review.

There are some incredible explanations being given, like test-time compute (i.e. the more time the agent spends, the more mistakes it finds), and that the reviews are done by multiple agents, blah blah. But just hold on for a second. Let’s take a cursory glance at the rest of the market.

There is @greptile, @coderabbitai, @QodoAI, @cubic_dev_ and many more, apart from @GitHubCopilot’s own code review. Literally all of them come out to about $0.50 per code review, and even for very large diffs, maybe $1 to $1.50 per review. That is at least 10x cheaper than the price Anthropic is quoting for each code review. On top of that, almost all of them also have a generous free tier for open source projects.
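To make the gap concrete, here is the back-of-the-envelope arithmetic on the rough per-review figures above. The prices are the ballpark numbers from this article, not official pricing, and `prs_per_month` is a made-up team volume purely for illustration.

```python
# Rough per-review prices in USD, taken from the figures quoted in this article.
claude_low, claude_high = 15.00, 25.00
others_typical, others_large_diff = 0.50, 1.50

# Most conservative comparison: cheapest Claude review vs. priciest competitor review.
min_ratio = claude_low / others_large_diff
# Widest comparison: priciest Claude review vs. a typical competitor review.
max_ratio = claude_high / others_typical


def monthly_cost(prs_per_month: int, price_per_review: float) -> float:
    """Total monthly spend if every PR gets exactly one review."""
    return prs_per_month * price_per_review


prs_per_month = 200  # hypothetical volume, an assumption for illustration only

print(f"{min_ratio:.0f}x to {max_ratio:.0f}x cheaper")
print(f"Claude (low end): ${monthly_cost(prs_per_month, claude_low):,.0f}/month")
print(f"Others (large diffs): ${monthly_cost(prs_per_month, others_large_diff):,.0f}/month")
```

Even in the comparison most favourable to Claude (its lowest price against the competitors' large-diff price), the ratio is still 10x.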

In fact, here is a list of links to some recent PRs in my personal side projects where I have gotten code reviewed by @QodoAI and @GitHubCopilot

All of them do a fairly great job - more than what I would have expected from myself if I were reviewing these PRs manually. They have noticed some mistakes that even I would not have easily noticed if I had reviewed these PRs manually.

But wait, forget dedicated code-review apps for a second. In most coding agents (including Claude Code), you can take the same agent you used to write the code, have it forget all the context (become a fresh pair of eyes), and review the diff before even pushing to GitHub for a PR!

You can just add a `/review` skill that you run before creating PRs, or even add some lines to your system prompt that tell the agent to spawn a new agent to review the diff as the final step of every coding task. And however large the diff, I have never had a code review via Claude Code or Codex push the token cost above $3 per review.
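A minimal sketch of that "fresh pair of eyes" step, assuming you wrap whichever agent you use behind a plain function. `run_agent` is a hypothetical callable (prompt in, review text out), not a real API of Claude Code or Codex, and the prompt wording and the `main` base branch are my own assumptions.

```python
import subprocess
from typing import Callable

# Instructions for the reviewing agent. The wording is illustrative, not a recommended prompt.
REVIEW_INSTRUCTIONS = (
    "You are a fresh reviewer with no memory of how this code was written. "
    "Review the diff below for bugs, security issues, and design problems. "
    "List findings by file, most severe first."
)


def get_diff(base: str = "main") -> str:
    """Return the diff between `base` and the working tree, using plain git."""
    result = subprocess.run(
        ["git", "diff", base],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


def build_review_prompt(diff: str) -> str:
    """Combine the review instructions and the diff into a single prompt."""
    return f"{REVIEW_INSTRUCTIONS}\n\n--- DIFF START ---\n{diff}\n--- DIFF END ---"


def review(diff: str, run_agent: Callable[[str], str]) -> str:
    """Send the diff to a fresh agent session, skipping empty diffs."""
    if not diff.strip():
        return "Nothing to review: the diff is empty."
    return run_agent(build_review_prompt(diff))
```

You would call `review(get_diff(), run_agent)` right before opening the PR. The important design choice is that the reviewing agent only receives the prompt built here, so it carries none of the context from the session that wrote the code.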

However many agents Claude spins up and however good the quality of their review is - it is just not worth spending $15 per review on it, when you can just automate running Claude Code itself with a “please review this” prompt on every PR created. And it will, for sure, cost less.

I do understand the motivation behind Anthropic releasing this product. With Claude Code being adopted like there is no tomorrow across organisations, I am sure Anthropic is finding exactly what I have been finding at work too: the major bottleneck today (the one on which leadership/execs are ready to burn their next SaaS procurement $$$) is review velocity, because there is already sunk cost in AI tools to generate code. The historical bottleneck, the stage where software engineers convert “thought into code”, has suddenly evaporated. So the next bottleneck, the one that is stopping all the $xxxK or $xM of AI spend from magically converting into $xxM of ARR growth for every company, is the “slow review” issue. That’s what all engineers have been telling their leadership/execs for the last many weeks.

So when the sales team and the FDEs of Anthropic went to every million-dollar account and asked their CIO/CTO what is the one thing they are ready to pay big $$$ for to “increase employee productivity” or whatever - I am sure all of them shouted out “code reviews” in unison. Hence, we are where we are.

Agentic Code Review Environments

All that said, I think we are all doing ‘agentic code reviews’ utterly wrong. OK, not entirely wrong, more like incomplete/inadequate.

You see, even before the AI era, when code was being written manually, code reviews used to evaluate the ‘diff’ (pull request) at two different levels: one about just the diff itself, and one in the broader context of what this change is trying to do for the larger system.

Yes, the reviewer was looking at the level of the code itself. They would make comments like “instead of implementing this class twice separately, let’s make a common interface?” or point out obvious security issues, like a variable being accessible from places it should not be, and all that. But apart from the design of the code and catching egregious coding bugs, a reviewer would also build a mental model of the entire codebase and review things from a higher level of abstraction too!

For example, I have often opened a PR review / diff review with some specific questions already in mind that I wanted to ascertain, like:

1. now that we are adding 2 providers, are we making a common provider interface they both implement?

2. since we are adding this feature in the UI that already exists in the CLI, should we avoid adding the entire code here, and instead add a common implementation in the other repo (the core library) and just call it here?

Something like case (1) can be ascertained just by looking at the `.diff` or `.patch` in isolation. Something like case (2) cannot be ascertained by an AI code reviewer which is only looking at the PR raised against the UI app and doesn’t have access to the repo of the CLI or the core library (sure, they could be in a monorepo instead, but you get my point - not all context for the review is necessarily available in the current diff itself).

So if the person generating the PR/diff is generating code using AI that read material from outside the codebase (the prompt, the research the agent would have done, the docs it would have read, the RFCs etc. it was fed), then surely the person reviewing the PR also needs to have all that context? Right?

And if the person generating the code is scaling up using AI to write ever more and more code, the review side needs to scale up in the same manner, rather than just replace human reviews with AI reviews.

In fact, there are often PRs where you tag an individual because you specifically want them to look at it, and not just any other engineer in the team. Sometimes you even tag multiple individuals, because you want each of them to review it from their specific perspective, and you want all of their reviews before merging the code. The reason you do it is that you want the code to be reviewed not just as a collection of +++ and --- lines of additions and removals, but with the specific context that only they have, which adds value to reviewing the change you’re trying to bring.

What the current crop of AI reviews do is just provide static code analysis (CodeQL etc.) on steroids. See, that’s the whole meaning of the word ‘static’ in static code reviews: we are just reviewing the ‘code’ in its static state, without running it and without putting it in the broader context of the rest of the systems. Human reviewers do not do static code reviews; their purpose is different.

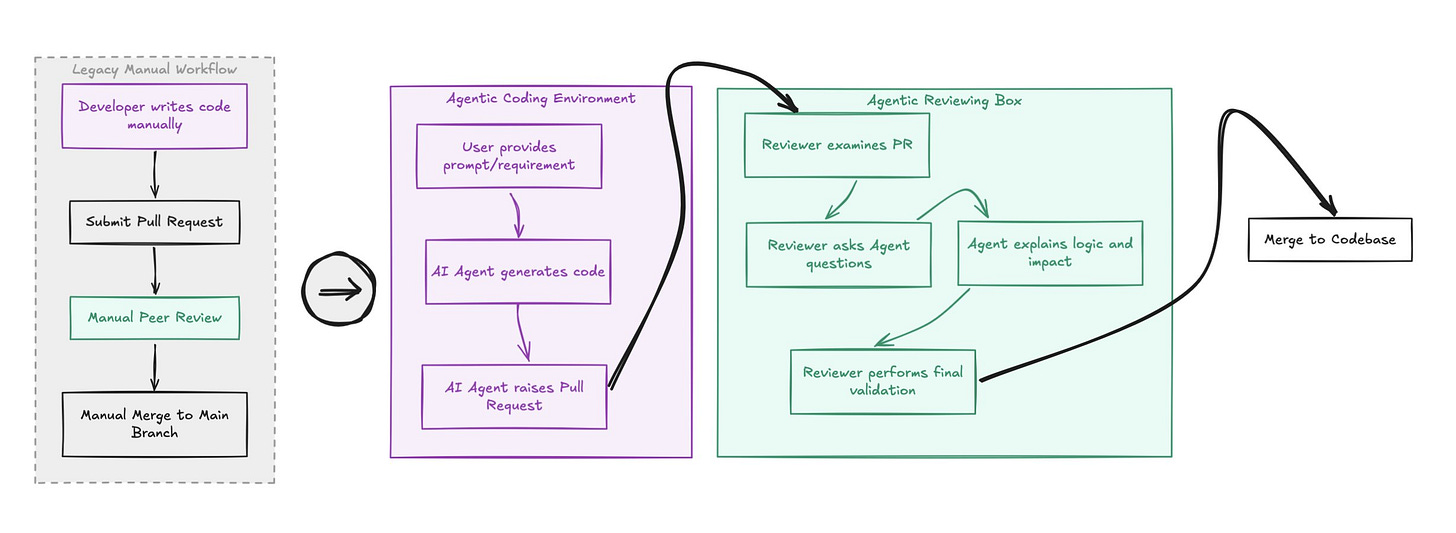

So yes, not only is Claude Code Review not worth it at $25 or even $15 compared to its competitors, it is not even what you actually want for tackling the deluge of pull requests at work. You solved coding velocity by giving coders an agentic environment to ‘generate’ code. You will solve review velocity by giving reviewers an agentic environment to generate reviews, based on their questions, the things they want to ascertain in the diff, and their specific context.
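To give a rough idea of what the input to such a review environment could look like, here is a small sketch. Every name in it (`ContextSource`, `ReviewRequest`, `to_prompt`) is hypothetical and made up for illustration; no existing tool exposes this shape as far as I know. The point is only that the reviewer's questions and the context from outside the diff are first-class inputs, next to the diff itself.

```python
from dataclasses import dataclass, field


@dataclass
class ContextSource:
    """A piece of context that lives outside the diff."""
    label: str    # e.g. "core library repo", "original prompt", "RFC"
    content: str  # the text the reviewing agent is allowed to read


@dataclass
class ReviewRequest:
    """One reviewer's view of a change: their questions plus their context."""
    diff: str
    reviewer: str
    questions: list[str]
    context: list[ContextSource] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the request as a single prompt for a reviewing agent."""
        parts = [f"Reviewer: {self.reviewer}", "Questions to answer:"]
        parts += [f"{i}. {q}" for i, q in enumerate(self.questions, start=1)]
        for source in self.context:
            parts += ["", f"## Context: {source.label}", source.content]
        parts += ["", "## Diff", self.diff]
        return "\n".join(parts)


request = ReviewRequest(
    diff="+ class NewProvider: ...",  # placeholder diff text
    reviewer="owner of the core library",
    questions=["Should this logic live in the core library instead of the UI app?"],
    context=[ContextSource("core library repo", "<relevant files from the other repo>")],
)
print(request.to_prompt())
```

Tagging three people on a PR then maps to three `ReviewRequest` objects over the same diff, each with different questions and different context.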

For static, drive-by PR reviews which just check whether the current diff, in and of itself, is good or not, the existing players - @greptile, @QodoAI, @coderabbitai and even just @GitHubCopilot review - are pretty good! Adding a skill/hook in your agentic harness to do a `/review` before raising a PR is also something you should bake into your workflow today. But none of that will do what code reviews were supposed to do.

Yep. Human code review is a bottleneck because AI is not a good steward of the product.

As much as Anthropic may want to dream, you can’t suddenly use AI to do things that AI cannot do.